Sounds great: Understanding the challenges of height channels in broadcast audio

Hyunkook Lee’s PCMA-3D array being tested under Manhattan Bridge, New York, US

Height channels in broadcast audio present a unique challenge. Hyunkook Lee, Associate Professor in Music Technology at the University of Huddersfield, UK, as well as director of the Centre for Audio and Psychoacoustic Engineering (CAPE) and founder and leader of the Applied Psychoacoustics Laboratory, has more insight than most into this subject, and recently shared his expertise at SVG Europe’s Next Generation Audio Summit, produced in association with Dolby.

Few would deny that the perception of height in sound is a key factor in the stadium experience for sports fans. Crowd noise from all around makes for an exhilarating, immersive ride through to the final whistle or the closing ceremony, and the mega-stadium experience is absolutely defined by that aural onslaught, particularly the height.

To capture that and broadcast it in all its glory is surely a pursuit and prize worth their weight in viewers?

Crucial consideration of height

It is only right that the development of object-based audio and ever-expanding channel counts has brought the crucial consideration of height into the spotlight for many sports broadcasters. The difficulty – and the convenience – is that height perception does not play by the same rules as surround perception and that has a knock-on effect on how we should be deploying microphones at sports events.

The word ‘convenience’ is added because, as it turns out, the way we perceive height plays nicely into solving the problem of cumbersome arrays and can lead to a dramatic spatial experience that many feel is lacking from ambisonic or purely coincident solutions.

Lee’s work, along with that of others, on the psychoacoustic perception of height information has reached practical implementation at some of the world’s biggest international sports events, and shows that this kind of research is as valuable to commercial broadcast as ever.

“We start with the principles behind 3D audio perception,” notes Lee. “From there we can get to the important question of how we should capture the sound field for the height dimension; how we create phantom imagery between the main layer and the height layer. We need to ask ‘how do we localise sound vertically?’ and ‘how do we perceive spaciousness vertically?’.”

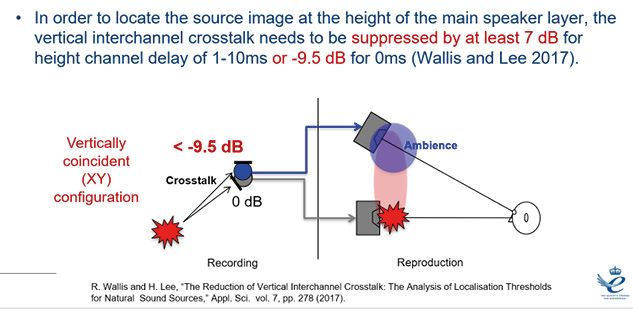

Lee’s work with R Wallis in 2017 highlighted the importance of reducing ‘vertical interchannel crosstalk’ (the amount of direct sound in the height channels) to height perception

The parameters that determine normal ear-level spatial perception are the interaural time difference (ITD), the interaural level difference (ILD) and the interaural cross-correlation (IACC), as well as the head-related transfer function (HRTF), which helps resolve sources where the ITD and ILD are the same.

It makes sense, for example, that a sound arriving in the right ear before it arrives in the left ear is coming from the right, and the same with level differences. It is the significant role of level difference that makes amplitude panning so effective for stereo and surround sound, and it is both time delay and level difference that make spaced microphone arrays so effective at conveying spaciousness.
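As a rough illustration of how level difference alone places a source between two loudspeakers, here is a minimal sketch of a constant-power pan law. The function names and the choice of the sine/cosine law are assumptions made for this example; they are not taken from Lee’s work or from any particular mixing console.

```python
import math


def constant_power_pan(position):
    """Return (left_gain, right_gain) for a pan position in [-1.0, 1.0].

    -1.0 is fully left, 0.0 is centre and +1.0 is fully right.
    The sine/cosine law keeps left**2 + right**2 equal to 1.
    """
    if not -1.0 <= position <= 1.0:
        raise ValueError("position must be between -1.0 and 1.0")
    angle = (position + 1.0) * math.pi / 4.0  # maps the position to 0..pi/2
    return math.cos(angle), math.sin(angle)


def level_difference_db(left_gain, right_gain):
    """Interchannel level difference in dB (positive means right is louder)."""
    if left_gain <= 0.0:
        return math.inf
    if right_gain <= 0.0:
        return -math.inf
    return 20.0 * math.log10(right_gain / left_gain)


if __name__ == "__main__":
    for position in (-0.5, 0.0, 0.5):
        left, right = constant_power_pan(position)
        difference = level_difference_db(left, right)
        print(f"pan {position:+.1f}: L={left:.3f} R={right:.3f} diff={difference:+.2f} dB")
```

At the centre position both gains are equal and the level difference is 0 dB; moving the pan position towards one side increases the level difference in favour of that side.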

Following this through, we might come to an array with height microphones spaced vertically above the main layer, with the four height microphones at the top corners of a cuboid. This makes for an unwieldy arrangement, though, and one definitely not suited to the sports broadcast arena.

In addition, it turns out that the height of a height-channel microphone in a spaced array has limited impact on the impression of height in a recording.

Height perception challenges

Early experiments showed that frequency has a big impact on height perception, with higher frequencies being perceived as coming from higher up. But that is not the whole story. Lee’s psychoacoustic experiments have confirmed that a delay between the main and height channels (interchannel time difference, ICTD) is not at all effective in conveying height information, and that amplitude (level) panning is not great either; it works to an extent, but the resolution is poor, reduced to three main angles of perceived elevation.


For sports recordings, the news is worse still; the vertical crosstalk that results from spaced height microphones, while possibly desirable in music recordings, can be detrimental to crowd noise, with audible comb filtering.
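To see why a delayed copy of the same sound causes comb filtering, consider an idealised model in which a signal is summed with one delayed, attenuated copy of itself. The sketch below lists the resulting notch frequencies and the peak-to-notch ripple. The model and the function names are assumptions for illustration only; real crowd noise reaching a listener from several loudspeakers is considerably more complex.

```python
def comb_notch_frequencies(delay_ms, max_frequency_hz=20000.0):
    """Notch frequencies (Hz) when a signal is summed with a copy delayed by delay_ms.

    Cancellation occurs where the delay equals an odd number of half periods:
    f = (2k + 1) / (2 * delay), for k = 0, 1, 2, ...
    """
    if delay_ms <= 0.0:
        raise ValueError("delay_ms must be positive")
    notches = []
    k = 0
    while True:
        frequency = (2 * k + 1) * 1000.0 / (2.0 * delay_ms)
        if frequency > max_frequency_hz:
            break
        notches.append(frequency)
        k += 1
    return notches


def comb_ripple_db(copy_level_db):
    """Peak-to-notch ripple in dB when the delayed copy sits copy_level_db below the original.

    Idealised two-path model: peaks reach 1 + g and notches fall to 1 - g,
    where g is the linear gain of the delayed copy.
    """
    if copy_level_db >= 0.0:
        raise ValueError("copy_level_db must be negative (copy quieter than original)")
    gain = 10.0 ** (copy_level_db / 20.0)
    return 20.0 * math.log10((1.0 + gain) / (1.0 - gain))


import math

if __name__ == "__main__":
    print(comb_notch_frequencies(1.0)[:3])
    for level in (-3.0, -7.0, -9.5):
        print(f"copy at {level} dB -> ripple of about {comb_ripple_db(level):.1f} dB")
```

In this simplified model a 1 ms delay puts the first notches at 500 Hz, 1,500 Hz and 2,500 Hz, and the ripple shrinks as the delayed copy is made quieter, which is consistent with the case for reducing crosstalk.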

Lee’s work with R Wallis in 2017 highlighted the importance of reducing ‘vertical interchannel crosstalk’ (the amount of direct sound in the height channels) to height perception. They concluded that listeners can locate source image height where that crosstalk is reduced sufficiently.

With a height channel delay of one millisecond to 10 milliseconds (spaced height microphones), reducing the interchannel crosstalk by at least 7 decibels does the trick, and for coincident height microphones (zero millisecond delay) the reduction needs to be at least 9.5 decibels.
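The figures quoted above can be turned into a simple check. This is only a sketch: it assumes the crosstalk is expressed as the level of direct sound in the height channel relative to the main layer, in decibels, and it refuses to guess for delays the article gives no figure for. The function names are hypothetical.

```python
def crosstalk_threshold_db(height_delay_ms):
    """Maximum crosstalk level (dB relative to the main layer) for a given height delay.

    Only the two cases quoted in the article are covered:
    coincident height microphones (0 ms) and spaced ones (1 ms to 10 ms).
    """
    if height_delay_ms == 0.0:
        return -9.5
    if 1.0 <= height_delay_ms <= 10.0:
        return -7.0
    raise ValueError("no threshold is quoted for this delay")


def meets_crosstalk_threshold(crosstalk_level_db, height_delay_ms):
    """True if the crosstalk is at or below the quoted threshold for that delay."""
    return crosstalk_level_db <= crosstalk_threshold_db(height_delay_ms)


if __name__ == "__main__":
    print(meets_crosstalk_threshold(-8.0, 0.0))  # coincident, only 8 dB of reduction
    print(meets_crosstalk_threshold(-8.0, 5.0))  # spaced, 8 dB of reduction
```

With these assumptions, 8 decibels of reduction is enough for a spaced height layer but falls short for a coincident one.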

This is important because it implies that if you can use a normal spaced surround array with coincident height microphones and reduce the crosstalk with the surround mics, you can significantly improve the perceived height of whatever you are trying to capture and get rid of the comb filtering. And, as a bonus, it survives downmix and binaural encoding very well too.

Crosstalk reduction

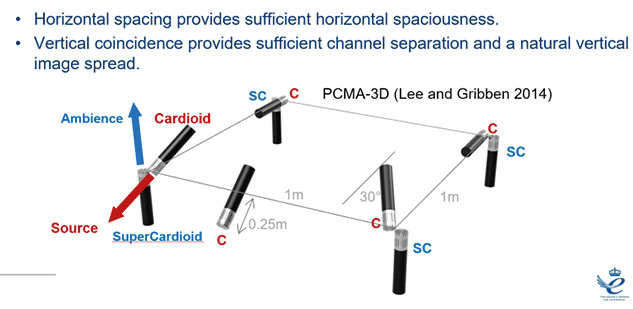

In practice, Lee has achieved the crosstalk reduction by using supercardioid height mics with cardioid surround mics and says others have done similar with baffles and so on. The results are fantastic.

The Schoeps ORTF-3D array was based on some of this work and was used very effectively at the FIFA World Cup in Russia by Felix Krückels. Krückels is a sports sound mixer and Professor of Broadcast Production and System Design at the Media campus of the University of Applied Sciences, Darmstadt-Dieburg, Germany.


Krückels notes that the Schoeps array “provides a far better sense of envelopment and a more accurate localisation as well as a larger listening area, which is of paramount importance for TV viewers”.

He goes on: “ORTF-3D was one of the most important contributors for the successful audio production of the TV coverage (or alike). It played a major role in delivering excellent immersiveness and precise localisation for crowd sounds in 3D (eg, England on the left stand and Germany on the right stand), which would have not been possible with a conventional microphone array like First-Order Ambisonics.”

One of the interesting things to be highlighted in conversation with Lee was the mismatch between the expectations we sometimes have of height information and the reality. “We don’t localise very well from above,” he explains. “And also because of the pitch-height effect we don’t perceive height very well in the real world either. We normally perceive bird sounds, for instance, lower than the source is positioned in reality.”

However, this doesn’t mean that multiple height channels do not have a part to play in immersion. Lee adds: “The front height ambience tends to give us more depth and openness, while the rear height channels help more with overall envelopment.”

So, multiple height channels can be a major factor in immersion. However, some may not yet have fully realised the potential simply through not playing to the strengths, and weaknesses, of human perception.